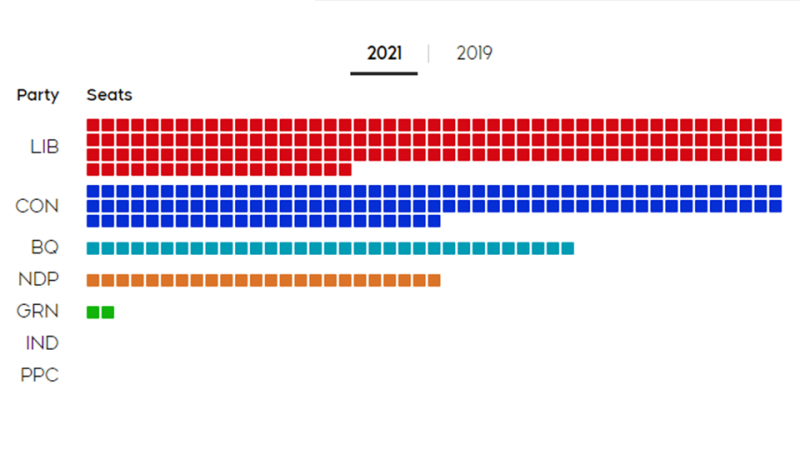

Six charts to help you understand the 2021 federal election

CTVNews.ca tells the story of the 44th federal election in six charts, breaking down the percentage of total votes won by each party, what was gained, what was lost, and which parts of Canada saw the closest, nail-biting races.